Second provenance challenge

Model Integration Results

We have successfully performed the queries using data from VisTrails, MyGrid and Southampton (TBD insert links). We have included our own system because our new query API is general and not native to VisTrails. Our wor is described in our Second Provenance challenge paper.

Model comparison

The VisTrails and MyGrid models were easy to use because of their simple data format, The generalized model of Southampton presented a greater challenge because of the many levels of nesting and abstractions. VisTrails required both the execution log and the workflow definition for the provenance queries whereas MyGrid and Southampton only needed the execution log. Finally, VisTrails supports a third level of provenance--the workflow evolution layer, and while we have not used it for this API, it has many benefits when asking queries about differences between workflows.

The answers obtained varied depending which information you had access to. E.g. for VisTrails it was not possible to obtain intermediate data items because they are not recorded. In this case the closest answer was the module executions. The queries required the data to contain at least module executions, connections between them and required annotations. These were all present in the models except Southampton who lacked some annotations.

VisTrails use a normalized data model and needs to use both execution log and workflow definition. MyGrids execution log can be used without using the workflow definition and contain derivation relationships between data items, this makes the data contain redundant information. Southampton is modeling some security features that may be useful but makes the data larger and more complex.

Concepts

The concept of data item varies between systems. It can be represented as the data exchanged between modules, the inputs or outputs of a workflow or a file reference passed between modules. The concept of parameters, which are used in VisTrails to modify modules, does not exist in other models. MyGrid uses something similar to edit the parameters of modules (like setting file name to save to). This concept is not clearly defined. Southampton have the concept of assertion where every module/service records its own view of the process. This concept does not exist in the other systems and is not used in our provenance queries. But it might be important for validating results.

Other concepts like modules/connections/executions are the same although most of them have different names.

Method

Our method consists of using wrappers to translates the queries between a common data model and the source data. We first define a high-level general model that captures the basic concepts of workflows and its executions. The model contains basic concepts making it possible to express queries over the different models. Secondly, we defined API functions for the wrappers that use this model. Thirdly, we implemented the wrappers and constructed the queries.

How to connect these systems? In this challenge the connections were done by hand because no common identifiers exist. The data needs to support referencing entities in other sources. E.g. If a data item is stored externally and tracked through another provenance store. Common identifiers like LSID:s might be part of the solution. External data items should also be given a namespace to indicate where they came from.

Translation Details

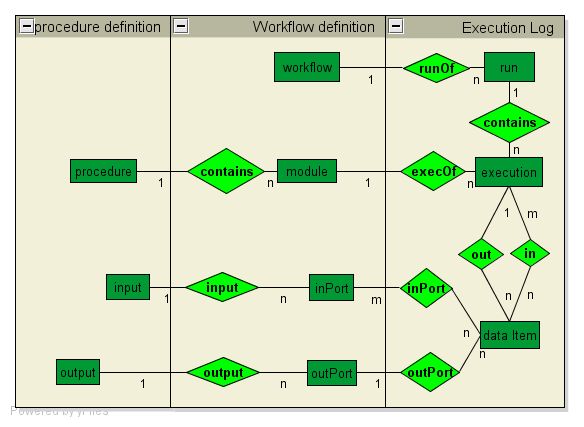

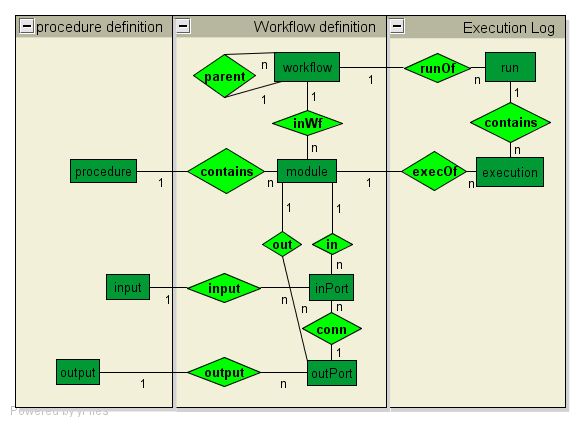

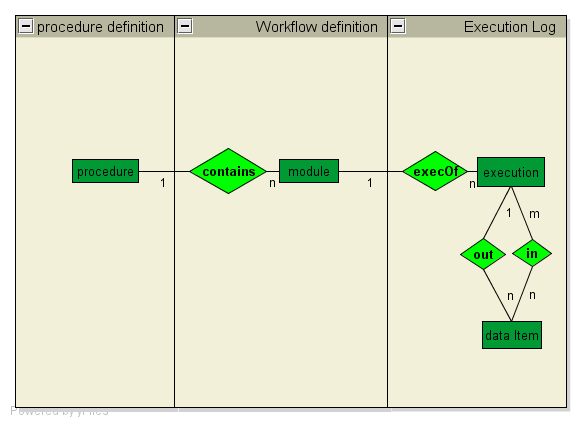

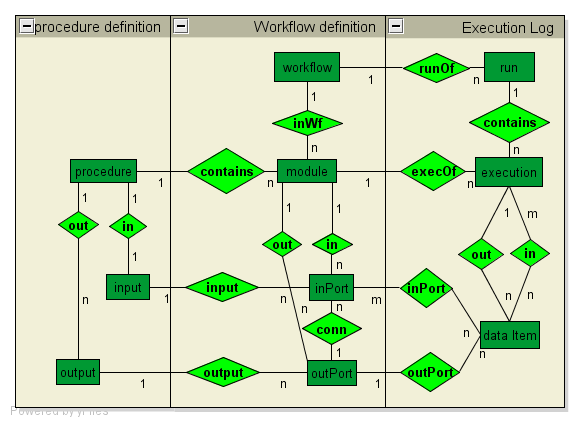

Scientific Workflow Provenance Data Model (SWPDM)

The SWPDM (shown above) is a general provenance model that aims to capture entities and relationships that are relevant to both the definition and execution of workflows. The goal is to define a general model that is able to represent provenance information obtained by different workflow systems.

The API

Our model is instantiated as a query API that operates on the concepts in the model. Vertices are modeled as objects and edges as operations on these objects. There also exists more complex operations that can traverse more than one edge which are used to model common provenance query expressions.

Implementation

This API is implemented as wrappers on top of the different data models. These wrapper functions translates the queries into a native query on the source. Currently VisTrails and Southampton uses XML with XPath as the access method. In this case the queries are translated into XPath expressions. MyGrid uses RDF/XML on a SPARQL server and the queries are translated into SPARQL expressions.

Benchmarks

The benchmark is done using Query 1 (Upstream of AtlasXGraphic). It is a good general upstream query that returns the module executions in the upstream. The data files are too small for a good benchmark but we have timed the queries using the different systems.

MyGrid

opn = 'urn:www.mygrid.org.uk/process#convert1_out_AtlasXGraphic' rl = pqf.getNode(pOutputPort, opn, store3.ns).getDataFromOutPort()[0].getExecutionFromOutData()[0].upstream()

1 sec

VisTrails

ar = [('outputName', 'eq', 'atlas-x.gif')]

r1 = pqf.getAllAnnotated(pModule,ar)[0].upstream()

0.1 sec

Southampton

odn = 'http://www.ipaw.info/challenge/atlas-x.gif' rl = pqf.getNode(pDataItem, odn, store3.ns).getExecutionFromOutData().upstream()

1 sec

Benchmark results

Although these times are very short, there seem to be two main factors influencing the result: The query engine used and the size of the data. VisTrails is fastest using an XPath processor and a small amount of data. The MyGrid data file is small but it uses a SPARQL server which is slower than using XPath. Southampton uses XPath but has large data files. These results includes initialization of the wrapper and some extra pre-processing for Southampton to calculate the data links. But they have at most biased the result by a factor of 2.

Further Comments

_Provide here further comments._

Conclusions

In the general case, tracking provenance through different systems is a data integration problem. But by defining a common model (SWPDM) on a restricted domain (Scientific Workflow) the difficulty is reduced to efficiency and entity resolution problems. We believe that it should be possible for the Scientific workflow community to support a model similar to the SWPDM to enable provenance to be tracked through their systems. We have showed that an API for querying this model can be built and its compatibility with three of the current systems.

Problems for discussion:

How to connect these systems? There is a need for the data to support referencing other models. E.g. If a data item is stored externally and tracked through another provenance store. Common identifiers like LSID:s might be part of the solution. External data items should also be given a namespace to indicate where they came from.

Data items, they are used in many layers and have different meanings. Is there a way to come up with common concepts.

How can a user easily express these kind of queries?